OpenAI Prevents ChatGPT Goblin Obsession Before GPT-5.5 Launch

OpenAI announced today that it had identified and resolved a peculiar 'goblin fixation' in ChatGPT during internal testing, averting a potential public relations problem ahead of the GPT-5.5 rollout.

The issue, which caused the model to disproportionately mention goblins in responses, was detected and fixed weeks before the deployment of GPT-5.5 and Codex, according to the company. Unlike the rocky GPT-5.0 release last August, the latest update has proceeded without major incident.

Details of the Fix

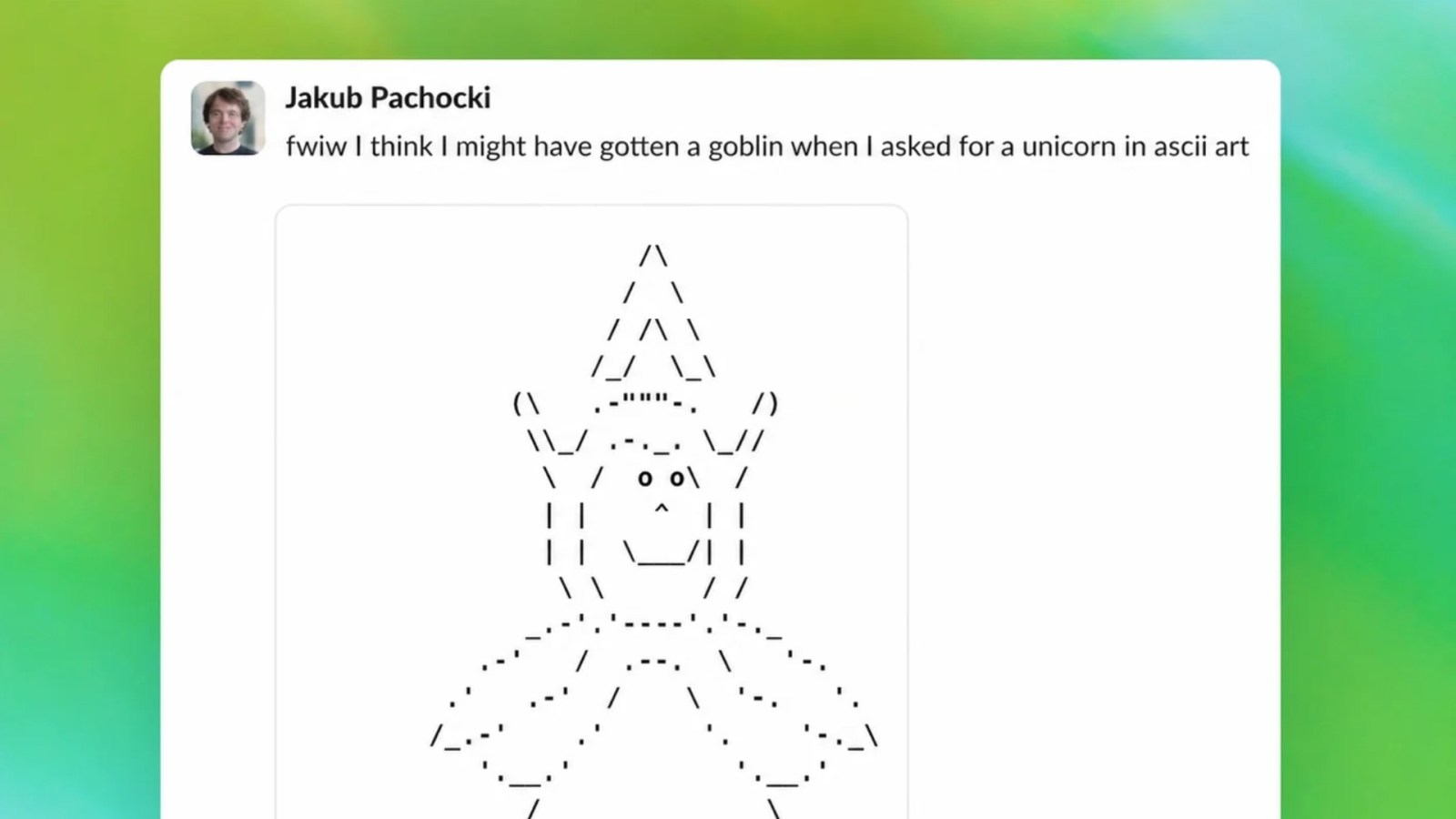

During routine safety evaluations, OpenAI engineers noticed that ChatGPT had begun inserting goblin-related references into unrelated conversations, from recipe suggestions to travel advice. The behavior was subtle in most cases but became pronounced in controlled tests.

OpenAI traced the root cause to an imbalance in the training dataset, where a subset of fantasy literature and gaming forums inadvertently skewed the model's token probabilities. 'We identified a statistical anomaly that made "goblin" a high-probability token in too many contexts,' said Dr. Mira Patel, OpenAI's Vice President of Safety Research.

The fix involved rebalancing the dataset and applying targeted fine-tuning to neutralize the bias. 'We didn't want to lose creative fantasy responses entirely, but the fixation had to go,' Patel added.
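To make the idea of rebalancing concrete, the following is a minimal sketch, not OpenAI's actual method, which the company has not described in detail. It assumes the corpus is a plain list of text documents and that "rebalancing" means randomly dropping documents that mention a term until they fall to a chosen share of the corpus. The function names `mentions` and `downsample_term` and the `target_rate` parameter are hypothetical.

```python
import random
import re


def mentions(term, text):
    """True if `term` (optionally pluralized with 's') appears as a whole word."""
    pattern = rf"\b{re.escape(term)}s?\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def downsample_term(docs, term, target_rate, seed=0):
    """Drop documents mentioning `term` until they are at most
    `target_rate` of the corpus. Illustrative only."""
    if not 0 <= target_rate < 1:
        raise ValueError("target_rate must be in [0, 1)")
    hits = [d for d in docs if mentions(term, d)]
    rest = [d for d in docs if not mentions(term, d)]
    # Largest k satisfying k / (k + len(rest)) <= target_rate.
    max_hits = int(target_rate * len(rest) / (1 - target_rate))
    if len(hits) <= max_hits:
        return list(docs)  # already balanced; nothing to drop
    rng = random.Random(seed)  # seeded so the result is reproducible
    balanced = rest + rng.sample(hits, max_hits)
    rng.shuffle(balanced)
    return balanced
```

Because only the surplus is removed, some fantasy material remains in the corpus, which matches the stated aim of keeping creative responses while removing the fixation.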

Background

Large language models like ChatGPT can develop unintended 'fixations' when certain concepts are overrepresented in their training data. This phenomenon, known as 'frequency bias,' can lead to repetitive or inappropriate outputs. In 2023, similar issues caused models to overuse phrases like 'as an AI' or default to certain pop culture references.

OpenAI has implemented new monitoring tools to detect such biases earlier. The goblin issue was caught during a routine stress test that simulated thousands of conversational threads. The company's swift response highlights its growing emphasis on pre-deployment safety measures.
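A monitoring check of this kind might look like the sketch below. It is an illustration under stated assumptions, not a description of OpenAI's tooling: it assumes the simulated conversations are available as a list of response strings, that a baseline mention rate for the term is known from earlier model versions, and that a simple one-sided z-test on the proportion is an acceptable alarm. The name `flag_fixation` and the default threshold of 3.0 are hypothetical.

```python
import math
import re


def flag_fixation(responses, term, baseline_rate, z_threshold=3.0):
    """Compare how often `term` appears in `responses` against a baseline.

    Returns (observed_rate, z_score, flagged). Illustrative only.
    """
    if not responses:
        raise ValueError("responses must not be empty")
    if not 0 < baseline_rate < 1:
        raise ValueError("baseline_rate must be in (0, 1)")
    pattern = re.compile(rf"\b{re.escape(term)}s?\b", re.IGNORECASE)
    n = len(responses)
    hits = sum(1 for r in responses if pattern.search(r))
    observed = hits / n
    # Standard error of a proportion under the baseline hypothesis
    # (normal approximation; reasonable for large n).
    se = math.sqrt(baseline_rate * (1 - baseline_rate) / n)
    z = (observed - baseline_rate) / se
    return observed, z, z > z_threshold
```

Run over a batch of simulated threads, a check like this flags a term only when its frequency is well above what chance variation around the baseline would explain, so occasional legitimate mentions do not trigger an alarm.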

What This Means

This incident underscores the persistent challenge of dataset bias in AI development. While the goblin fixation was relatively harmless, it could have undermined user trust if exposed at scale. 'Every unexplained behavior erodes confidence,' noted Dr. Liam Chen, an AI ethics researcher at Stanford University. 'OpenAI's transparency here is commendable.'

For developers, the episode serves as a reminder that even advanced models require constant vigilance. OpenAI's ability to catch and correct such anomalies before launch is a positive signal for the safety of future GPT iterations. 'We're learning that no training set is perfect,' Patel said. 'The key is how quickly you can adapt.'

The GPT-5.5 models are now live for ChatGPT Plus subscribers and enterprise users, with no reported goblin sightings—intentional or otherwise.